Fashion MNIST

This guide is a copy of TensorFlow’s tutorial Basic classification: Classify images of clothing. It does NOT use a complex-valued dataset; it only serves to check that the CVNN layers work correctly by comparing them against a known working example.

It trains a neural network model to classify images of clothing, like sneakers and shirts. It’s okay if you don’t understand all the details; this is a fast-paced overview of a complete TensorFlow program with the details explained as you go.

This guide uses tf.keras, a high-level API to build and train models in TensorFlow.

[1]:

# TensorFlow and tf.keras
import tensorflow as tf

# Helper libraries
import numpy as np
import matplotlib.pyplot as plt

# My library!
from cvnn import layers

print(tf.__version__)

2.1.0

Import the Fashion MNIST dataset

This guide uses the Fashion MNIST dataset, which contains 70,000 grayscale images in 10 categories. The images show individual articles of clothing at low resolution (28 by 28 pixels).

Fashion MNIST is intended as a drop-in replacement for the classic MNIST dataset—often used as the “Hello, World” of machine learning programs for computer vision. The MNIST dataset contains images of handwritten digits (0, 1, 2, etc.) in a format identical to that of the articles of clothing you’ll use here.

This guide uses Fashion MNIST for variety, and because it’s a slightly more challenging problem than regular MNIST. Both datasets are relatively small and are used to verify that an algorithm works as expected. They’re good starting points to test and debug code.

Here, 60,000 images are used to train the network and 10,000 images to evaluate how accurately the network learned to classify images. Fashion MNIST can be imported and loaded directly from TensorFlow:

[2]:

fashion_mnist = tf.keras.datasets.fashion_mnist

(train_images, train_labels), (test_images, test_labels) = fashion_mnist.load_data()

Loading the dataset returns four NumPy arrays:

- The train_images and train_labels arrays are the training set, the data the model uses to learn.
- The model is tested against the test set, the test_images and test_labels arrays.

The images are 28x28 NumPy arrays, with pixel values ranging from 0 to 255. The labels are an array of integers, ranging from 0 to 9. These correspond to the class of clothing the image represents:

| Label | Class |
|---|---|
| 0 | T-shirt/top |
| 1 | Trouser |
| 2 | Pullover |
| 3 | Dress |
| 4 | Coat |
| 5 | Sandal |
| 6 | Shirt |
| 7 | Sneaker |
| 8 | Bag |
| 9 | Ankle boot |

Each image is mapped to a single label. Since the class names are not included with the dataset, store them here to use later when plotting the images:

[3]:

class_names = ['T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat',
               'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot']

Explore the data

Let’s explore the format of the dataset before training the model. The following shows there are 60,000 images in the training set, with each image represented as 28 x 28 pixels:

[4]:

train_images.shape

[4]:

(60000, 28, 28)

Likewise, there are 60,000 labels in the training set:

[5]:

len(train_labels)

[5]:

60000

Each label is an integer between 0 and 9:

[6]:

train_labels

[6]:

array([9, 0, 0, ..., 3, 0, 5], dtype=uint8)

There are 10,000 images in the test set. Again, each image is represented as 28 x 28 pixels:

[7]:

test_images.shape

[7]:

(10000, 28, 28)

And the test set contains 10,000 image labels:

[8]:

len(test_labels)

[8]:

10000

Preprocess the data

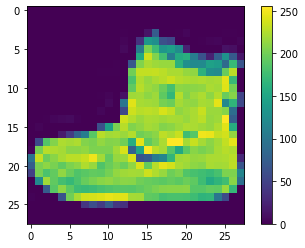

The data must be preprocessed before training the network. If you inspect the first image in the training set, you will see that the pixel values fall in the range of 0 to 255:

[9]:

plt.figure()
plt.imshow(train_images[0])
plt.colorbar()
plt.grid(False)
plt.show()

Scale these values to a range of 0 to 1 before feeding them to the neural network model. To do so, divide the values by 255. It’s important that the training set and the testing set be preprocessed in the same way:

[10]:

train_images = train_images / 255.0

test_images = test_images / 255.0
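Dividing the uint8 arrays by 255.0 also changes their dtype to float64. The NumPy sketch below applies the same scaling to random stand-in pixels (not the real images) to show the resulting range and dtype. The final cast to float32 is an optional extra step, not part of the original guide, that keeps the inputs in the same precision as layers built with dtype=np.float32.

```python
import numpy as np

# Stand-in for the uint8 Fashion MNIST images: random pixels in [0, 255]
# (illustrative data only, not the real dataset).
rng = np.random.default_rng(0)
fake_images = rng.integers(0, 256, size=(4, 28, 28), dtype=np.uint8)

# True division by a float promotes uint8 to float64 and maps 0..255 to 0..1.
scaled = fake_images / 255.0
print(scaled.dtype, scaled.min(), scaled.max())

# Optional (assumption, not in the original guide): match float32 layers.
scaled32 = scaled.astype(np.float32)
print(scaled32.dtype)
```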

To verify that the data is in the correct format and that you’re ready to build and train the network, let’s display the first 25 images from the training set and display the class name below each image.

[11]:

plt.figure(figsize=(10,10))
for i in range(25):
    plt.subplot(5,5,i+1)
    plt.xticks([])
    plt.yticks([])
    plt.grid(False)
    plt.imshow(train_images[i], cmap=plt.cm.binary)
    plt.xlabel(class_names[train_labels[i]])
plt.show()

Build the model

Building the neural network requires configuring the layers of the model, then compiling the model.

Set up the layers

The basic building block of a neural network is the layer. Layers extract representations from the data fed into them. Hopefully, these representations are meaningful for the problem at hand.

Most of deep learning consists of chaining together simple layers. Most layers, such as tf.keras.layers.Dense, have parameters that are learned during training.

[12]:

model = tf.keras.Sequential([
    layers.ComplexFlatten(input_shape=(28, 28)),
    layers.ComplexDense(128, activation='cart_relu', dtype=np.float32),
    layers.ComplexDense(10, dtype=np.float32)
])

Because the goal of this CVNN library is to work with complex-valued datasets, its complex layers use the np.complex64 dtype by default. Fashion MNIST is real-valued, so dtype=np.float32 is passed to the ComplexDense layers above.

The first layer in this network, layers.ComplexFlatten, transforms the format of the images from a two-dimensional array (of 28 by 28 pixels) to a one-dimensional array (of 28 * 28 = 784 pixels). Think of this layer as unstacking rows of pixels in the image and lining them up. This layer has no parameters to learn; it only reformats the data.
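The flattening step can be mimicked with a NumPy reshape. This sketch works on a toy batch and only illustrates the row-by-row unstacking described above; it does not call the cvnn ComplexFlatten layer, whose implementation may differ in details such as dtype handling.

```python
import numpy as np

# A toy batch of 2 "images", each 28 x 28.
batch = np.arange(2 * 28 * 28, dtype=np.float32).reshape(2, 28, 28)

# Keep the batch axis and line up the 28 rows of each image one after another.
flat = batch.reshape(batch.shape[0], -1)
print(flat.shape)  # (2, 784)

# Pixel (row r, column c) of an image lands at position r * 28 + c.
r, c = 3, 5
assert flat[0, r * 28 + c] == batch[0, r, c]
```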

After the pixels are flattened, the network consists of a sequence of two layers.ComplexDense layers. These are densely connected, or fully connected, neural layers. The first ComplexDense layer has 128 nodes (or neurons). The second (and last) layer returns a logits array of length 10, where each node holds a score indicating how strongly the current image is associated with the corresponding class.
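To make the shapes concrete, here is a NumPy sketch of the forward pass through the two dense layers, with hypothetical random weights standing in for the learned ones. It assumes that cart_relu (ReLU applied separately to the real and imaginary parts, as its name suggests) reduces to an ordinary ReLU on real inputs, and that each layer has a weight matrix and a bias vector as tf.keras.layers.Dense does; the parameter count follows from that assumption.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical weights with the model's shapes (784 -> 128 -> 10).
W1 = rng.normal(scale=0.05, size=(784, 128)).astype(np.float32)
b1 = np.zeros(128, dtype=np.float32)
W2 = rng.normal(scale=0.05, size=(128, 10)).astype(np.float32)
b2 = np.zeros(10, dtype=np.float32)

def forward(x):
    """Map a (batch, 784) real-valued input to (batch, 10) logits."""
    hidden = np.maximum(x @ W1 + b1, 0.0)  # ReLU, assumed equal to cart_relu on reals
    return hidden @ W2 + b2                # no activation: raw logits

x = rng.random((3, 784), dtype=np.float32)
logits = forward(x)
print(logits.shape)  # (3, 10)

# Trainable parameters: weights plus biases of both dense layers.
n_params = (784 * 128 + 128) + (128 * 10 + 10)
print(n_params)  # 101770
```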

Compile the model

Before the model is ready for training, it needs a few more settings. These are added during the model’s compile step:

- Loss function: measures how accurate the model is during training. You want to minimize this function to “steer” the model in the right direction.

- Optimizer: determines how the model is updated based on the data it sees and its loss function.

- Metrics: used to monitor the training and testing steps. The following example uses accuracy, the fraction of the images that are correctly classified.

[13]:

model.compile(optimizer='adam',
              loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
              metrics=['accuracy'])
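With from_logits=True, the loss applies the softmax to the raw outputs itself, which is why the last ComplexDense layer has no activation. A minimal NumPy sketch of what sparse categorical cross-entropy computes from logits with integer labels, averaged over the batch; it illustrates the formula and is not the Keras implementation itself.

```python
import numpy as np

def sparse_ce_from_logits(logits, labels):
    """Mean cross-entropy for integer class labels, computed from raw logits."""
    # Subtract the row-wise max before exponentiating for numerical stability.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    # Negative log-probability of the true class, averaged over the batch.
    return -log_probs[np.arange(len(labels)), labels].mean()

logits = np.array([[2.0, 0.5, -1.0],
                   [0.1, 0.2, 3.0]])
labels = np.array([0, 2])
print(sparse_ce_from_logits(logits, labels))
```

With all-equal logits over 10 classes the loss is log(10), roughly 2.30, which is a useful sanity check for an untrained classifier.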

Train the model

Training the neural network model requires the following steps:

- Feed the training data to the model. In this example, the training data is in the train_images and train_labels arrays.

- The model learns to associate images and labels.

- You ask the model to make predictions about a test set—in this example, the test_images array.

- Verify that the predictions match the labels from the test_labels array.
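In practice, fit performs the first two steps on small mini-batches rather than on all 60,000 images at once. The sketch below assumes Keras' default batch_size of 32 (used when fit is called without one, as in this guide) and shows how many update steps make up one epoch and how a shuffled epoch could be split into batches; Keras' own batching logic is more involved.

```python
import math
import numpy as np

n_samples = 60000
batch_size = 32  # Keras' default when fit() receives no batch_size

steps_per_epoch = math.ceil(n_samples / batch_size)
print(steps_per_epoch)  # 1875

# One shuffled pass over the data, split into mini-batches of sample indices.
rng = np.random.default_rng(0)
order = rng.permutation(n_samples)
batches = [order[i:i + batch_size] for i in range(0, n_samples, batch_size)]
print(len(batches), len(batches[0]))  # 1875 32
```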

Feed the model

To start training, call the model.fit method—so called because it “fits” the model to the training data:

[14]:

model.fit(train_images, train_labels, epochs=10)


As the model trains, the loss and accuracy metrics are displayed. In the TensorFlow tutorial this guide copies, the equivalent model reaches an accuracy of about 0.91 (or 91%) on the training data.

Evaluate accuracy

Next, compare how the model performs on the test dataset:

[ ]:

test_loss, test_acc = model.evaluate(test_images, test_labels, verbose=2)

print('\nTest accuracy:', test_acc)

It turns out that the accuracy on the test dataset is a little less than the accuracy on the training dataset. This gap between training accuracy and test accuracy represents overfitting. Overfitting happens when a machine learning model performs worse on new, previously unseen inputs than it does on the training data. An overfitted model “memorizes” the noise and details in the training dataset to a point where it negatively impacts the performance of the model on the new data.
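The accuracy reported by evaluate is the fraction of images whose highest-scoring class matches the label; computing the same quantity on the training set and on the test set and subtracting gives the gap described above. A minimal NumPy sketch with made-up logits:

```python
import numpy as np

def accuracy(logits, labels):
    """Fraction of examples whose highest-scoring class equals the true label."""
    return float(np.mean(np.argmax(logits, axis=1) == labels))

# Made-up logits for 4 images (3 classes) and their true labels.
logits = np.array([[0.1, 2.0, -1.0],
                   [1.5, 0.2, 0.3],
                   [0.0, 0.1, 0.9],
                   [2.2, 0.0, 0.1]])
labels = np.array([1, 0, 1, 0])
print(accuracy(logits, labels))  # 0.75: the third image is misclassified
```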

Make predictions

With the model trained, you can use it to make predictions about some images. The model outputs linear scores called logits. Attach a softmax layer to convert the logits to probabilities, which are easier to interpret.

[ ]:

probability_model = tf.keras.Sequential([model, tf.keras.layers.Softmax()])

predictions = probability_model.predict(test_images)
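tf.keras.layers.Softmax normalizes along the last axis by default. The NumPy function below is a stand-in for that layer, not its implementation: it maps each row of logits to probabilities that sum to 1 and keeps the same arg-max, which is why the predicted class is the same whether you inspect logits or probabilities.

```python
import numpy as np

def softmax(logits):
    """Row-wise softmax: turn each row of logits into probabilities."""
    shifted = logits - logits.max(axis=-1, keepdims=True)  # avoids overflow in exp
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

logits = np.array([[1.0, 2.0, 3.0],
                   [1000.0, 1000.0, 1000.0]])
probs = softmax(logits)
print(probs.sum(axis=1))  # each row sums to 1
print(probs[1])           # equal logits give equal probabilities
```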

Here, the model has predicted the label for each image in the testing set. Let’s take a look at the first prediction:

[ ]:

predictions[0]

A prediction is an array of 10 numbers. They represent the model’s “confidence” that the image corresponds to each of the 10 different articles of clothing. You can see which label has the highest confidence value:

[ ]:

np.argmax(predictions[0])

So, the model is most confident that this image is an ankle boot, or class_names[9]. Examining the test label shows that this classification is correct:

[ ]:

test_labels[0]

Graph this to look at the full set of 10 class predictions.

[1]:

def plot_image(i, predictions_array, true_label, img):
    true_label, img = true_label[i], img[i]
    plt.grid(False)
    plt.xticks([])
    plt.yticks([])

    plt.imshow(img, cmap=plt.cm.binary)

    predicted_label = np.argmax(predictions_array)
    if predicted_label == true_label:
        color = 'blue'
    else:
        color = 'red'

    plt.xlabel("{} {:2.0f}% ({})".format(class_names[predicted_label],
                                         100*np.max(predictions_array),
                                         class_names[true_label]),
               color=color)

def plot_value_array(i, predictions_array, true_label):
    true_label = true_label[i]
    plt.grid(False)
    plt.xticks(range(10))
    plt.yticks([])
    thisplot = plt.bar(range(10), predictions_array, color="#777777")
    plt.ylim([0, 1])
    predicted_label = np.argmax(predictions_array)

    thisplot[predicted_label].set_color('red')
    thisplot[true_label].set_color('blue')

Verify predictions

With the model trained, you can use it to make predictions about some images.

Let’s look at the 0th image, predictions, and prediction array. Correct prediction labels are blue and incorrect prediction labels are red. The number gives the percentage (out of 100) for the predicted label.

[ ]:

i = 0
plt.figure(figsize=(6,3))
plt.subplot(1,2,1)
plot_image(i, predictions[i], test_labels, test_images)
plt.subplot(1,2,2)
plot_value_array(i, predictions[i], test_labels)
plt.show()

[ ]:

i = 12
plt.figure(figsize=(6,3))
plt.subplot(1,2,1)
plot_image(i, predictions[i], test_labels, test_images)
plt.subplot(1,2,2)
plot_value_array(i, predictions[i], test_labels)
plt.show()

Let’s plot several images with their predictions. Note that the model can be wrong even when very confident.

[ ]:

# Plot the first X test images, their predicted labels, and the true labels.
# Color correct predictions in blue and incorrect predictions in red.
num_rows = 5
num_cols = 3
num_images = num_rows*num_cols
plt.figure(figsize=(2*2*num_cols, 2*num_rows))
for i in range(num_images):
    plt.subplot(num_rows, 2*num_cols, 2*i+1)
    plot_image(i, predictions[i], test_labels, test_images)
    plt.subplot(num_rows, 2*num_cols, 2*i+2)
    plot_value_array(i, predictions[i], test_labels)
plt.tight_layout()
plt.show()

Use the trained model

Finally, use the trained model to make a prediction about a single image.

[ ]:

# Grab an image from the test dataset.
img = test_images[1]
print(img.shape)

tf.keras models are optimized to make predictions on a batch, or collection, of examples at once. Accordingly, even though you’re using a single image, you need to add a batch dimension so that it becomes a batch of one:

[ ]:

# Add the image to a batch where it's the only member.
img = np.expand_dims(img, 0)
print(img.shape)
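np.expand_dims(img, 0) inserts a new leading axis of length 1, turning a (28, 28) image into a (1, 28, 28) batch. This NumPy sketch, using a blank stand-in image, shows an equivalent indexing form and how several images could be combined into one batch with np.stack:

```python
import numpy as np

img = np.zeros((28, 28), dtype=np.float32)  # stand-in for test_images[1]

batch_of_one = np.expand_dims(img, 0)  # shape (1, 28, 28)
same_thing = img[np.newaxis, ...]      # equivalent indexing form
print(batch_of_one.shape, same_thing.shape)

# Several images form one batch by stacking along a new first axis.
batch_of_three = np.stack([img, img, img])
print(batch_of_three.shape)  # (3, 28, 28)
```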

Now predict the correct label for this image:

[ ]:

predictions_single = probability_model.predict(img)

print(predictions_single)

[ ]:

plot_value_array(1, predictions_single[0], test_labels)

_ = plt.xticks(range(10), class_names, rotation=45)

tf.keras.Model.predict returns a NumPy array with one row of predictions per image in the batch. Grab the predictions for our (only) image in the batch:

[ ]:

np.argmax(predictions_single[0])
